🌕 Ex abrupto

A hostile alien civilisation has arrived with the intention of invading Earth. Originating from a planet with a turbulent environment, it is seeking a new home. However, the gravitational pull of its triple-sun system makes it challenging for the aliens to plan a successful invasion. To overcome this, they decide to infiltrate Earth’s society and culture, using sophisticated technology to send subatomic particles that spy on human activity and disrupt communication and technology. To combat this threat, humanity forms a covert initiative that selects four individuals to devise a strategy to repel the invasion. These people are granted unrestricted access to significant resources and are freed from government oversight. A sociology professor, one of these »chosen ones«, proposes a controversial theory called the »Dark Forest«. This theory asserts that the universe is a perilous place where civilisations must remain concealed to survive. Advanced beings, according to the theory, will eliminate potential threats before they become dangerous. Therefore, Earth should remain hidden from these unknown creatures and any other potential threats.1

The Dark Forest is a sci-fi novel by Liu Cixin that draws on a hypothesis described by astronomer and author David Brin, which postulates that numerous extraterrestrial civilisations may exist in the vast expanse of the universe, yet remain silent and cautious. The theory describes how any advanced being capable of space travel would view other intelligent life forms as potential threats, resulting in the annihilation of any emerging life that reveals itself. Consequently, the electromagnetic spectrum would be devoid of activity, much like a »dark forest« filled with armed hunters.

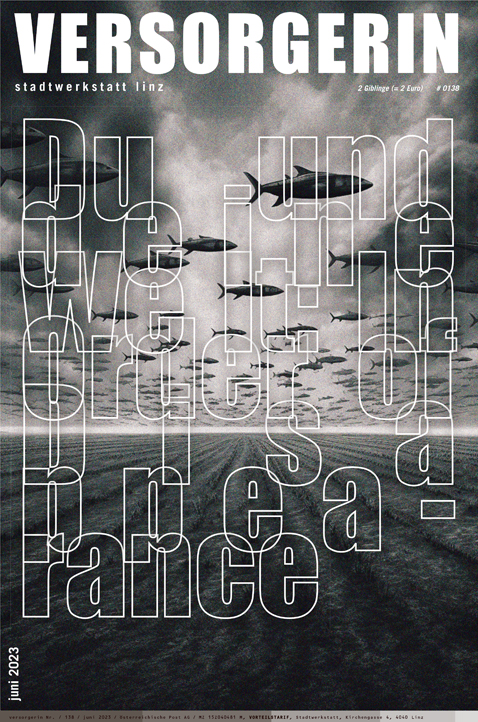

In a similar vein, researcher and media theorist Bogna Konior presents what she calls »The Dark Forest Theory of the Internet«, which draws parallels to the extraterrestrial hypothesis. This theory highlights the automated dynamics of internet communication, a system that tends to maintain a high level of entropy and thereby generates conflict. Users must make themselves known to signal »safe« sociality, but this legibility also exposes their personal information, making them vulnerable to attack and control. The more detailed users’ self-representations are, the easier they are to govern and target, creating a digital »dark forest« where individuals must tread cautiously.

»The internet is a dark forest. […] The internet is a tangible space, yes, but also a mental expanse. Made for sleepwalking, for a mundane delirium. For sacrificial rituals. People get lost in it by shining light in all the wrong places, exposing too much about themselves, communicating impulsively, recklessly.

There is only one, simple riddle to answer at the entrance to the internet: What’s on your mind? […] An invitation to communicate.«2

🌖 The invitation

The prevailing economic system is sharply focused on the collection and commodification of personal data for financial gain. In this system, Big Tech firms are gaining an unprecedented level of power and control over our decision-making processes and behavioural inclinations, in large part through the application of sentiment analysis. Surveillance Capitalism has turned people into prosumers – a hybrid of producers, consumers, and products – by exploiting them as a source of free labour. The result is nothing short of a total collapse of private space, with even the most intimate human experiences being used as raw material from which to extract valuable behavioural data.3

Within the digital environments offered to users by centralised social media platforms in particular, we witness a radical shift in power and control. While we may believe that these platforms are merely spaces for narcissistic delirium, we are in fact being invited to communicate.

From a sociological standpoint, the operational structures of social media platforms manipulate the innate human need for self-determination, providing individuals with an ideal space to construct and cultivate their persona while receiving recognition from an audience vaster than they may ever have envisioned. By fostering a sense of group and societal belonging, this process produces a psychological shift: people not only voluntarily disclose their personal information but also become an integral part of a participatory surveillance system, in which users themselves are objects of power alongside the non-institutional agencies that operate on the internet.

Sociologist André Jansson describes this as the »Culture of Interveillance«, a social phenomenon in which individuals actively monitor and regulate each other’s behaviour in a mutual and reciprocal manner. Unlike traditional forms of surveillance, which are often associated with hierarchical power structures or governmental control, interveillance is characterised by a more balanced power dynamic, in which each party holds a comparable degree of monitoring and regulating power.4

Examples of manipulative features within social media platforms include the »disappearing« pictures of applications such as Snapchat and the Stories feature on Instagram, Facebook and TikTok. These features have instilled a widespread misconception that when content (a photo, video or text) disappears, the underlying information is deleted, while in fact it remains stored in the platforms’ databases. As a result, the idea of disappearing content has encouraged and enabled a greater production of data, and of data considerably more intimate and personal than ever before.

Moreover, augmented reality features such as face filters have boosted the production of data related to face tracking and sentiment analysis by adding a playful layer to the camera. Face filters have also become influential marketing tools, developed on dedicated platforms such as Lens Studio, Meta Spark and TikTok Effect House, which give creators proprietary tools for building filters as well as analytics on performance metrics such as engagement and target audience.

Often unknowingly, users become an active part of these hidden power dynamics, which are no longer based on the control and repression of bodies,5 but on prevention through the promotion of beliefs and habits that exploit processes of identification and manifest themselves in the form of viral trends. Social media algorithms are designed to push specific agendas. On the one hand, they empower people to speak up and feel in control of the dominant discourse, in a sort of digital parrhesia6; on the other, they encapsulate people in macro bubbles that lead to extremist ideologies and points of view which may be harmful to individuals and to society as a whole.

As a result, in seeking to define themselves within society, individuals often end up embracing somebody else’s agenda.

The dynamics of TikTok, currently one of the most popular social media platforms, demonstrate this especially clearly. The app allows users to create, share, and discover short-form videos in an environment where people (many of them young users still developing their self-image) are driven by the desire to replicate patterns that go viral in order to achieve engagement and success. This involves mimicking video trends, often with the same music, thereby conforming to standards set by the community and promoting and reinforcing ideologies that reflect the platform’s interests.

🌑 Exit

Once aware of our role within these structures, we can choose between two paths. We can take a critical stance, deploying subversion techniques aimed at disrupting and upsetting the everyday use of the platforms, for instance by feeding the entropic void of information with inaccurate data until it becomes unreadable and useless. Or, conversely, we can decide not to engage with these dynamics at all, and quietly hide among the trees.