A small but prolific community challenges and subverts the preconceived notions of high-performance, Westernized and English-based rules of computation, which are so pervasive and enmeshed in the routine of the computer operator that they go unnoticed. By incessantly pushing the limits of computation, representation and logic systems, esolangs offer glimpses of how radically different computation could have been – and may still become.

In programming, source code has an inherent double purpose: it should be readable by both humans and machines, which happens at different abstraction levels involving parsing, compiling or interpretation. The catch is that the simplest, most straightforward and multifunctional PLs may seem like the best approach, but their readability and logic are terribly complicated for their human counterparts, and so began the quest to create and improve high-level PLs for more specific uses. That was the gateway for embedding the English language as the lingua franca of programming, in which »paradoxically increasing accessibility […] to those who know English, can raise obstacles to others«.[1]

»The computer compels compliance«[2] at its very core, turning the simple choice of programming without any English element into a critical and political statement in itself – and an incredibly difficult one. In the 1970s, the Chinese language had its existence threatened by the arrival of digitalization, as the first computers prioritized Latin character encodings, baked directly into their architecture. In addition, the extremely limited memory available at the time made the digitalization of at least 2000 simplified Chinese characters – and their subsequent input via QWERTY keyboards – a herculean task.[3]

In contrast, when descending to the low level of assembly or even machine code, the programmer comes close to the bare-bones logic of manipulating strictly numerical instructions. Low-level, minimalistic PLs of this kind are known as ‘Turing tarpits’: languages that are radically simplified yet still Turing-complete, which makes them incredibly hard to use. Turing-completeness (TC) is a fundamental concept of computational universality that goes back to Alan Turing. It describes a system that is theoretically capable of executing any computable task, given unlimited time and memory, which includes simulating any other Turing machine.[4] Although not required of an esolang, TC is a feature that many strive for as the ultimate proof of concept. Demonstrating it is part of the reason behind the popularity of brainfuck, which proved to be robust enough with a minimum of features. Created in 1993 by Urban Müller, brainfuck’s main characteristic is that it attains TC with only 8 discrete commands and a tiny compiler, manipulating the memory of a machine directly. Its name hints at the insane level of difficulty, acknowledged by its creator in the project’s read.me file with a defiant note: »Who can program anything useful with it? :)«.[5]
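To make the »8 discrete commands« concrete, the following is a minimal sketch of a brainfuck interpreter in Python. It is not Müller’s original compiler. The function name `run_brainfuck`, the tape of 30,000 cells, the 8-bit wrapping cells, the wrapping data pointer and the treatment of end-of-input as 0 are all assumptions; they follow widespread conventions but are not fixed by the text above.

```python
def run_brainfuck(program: str, input_data: str = "", tape_size: int = 30000) -> str:
    """Run a brainfuck program and return its output as a string.

    Assumptions (common conventions, not taken from the essay):
    8-bit wrapping cells, a wrapping data pointer, end-of-input reads as 0.
    """
    # Pre-compute the position of the matching bracket for every '[' and ']'.
    jumps = {}
    open_brackets = []
    for pos, char in enumerate(program):
        if char == "[":
            open_brackets.append(pos)
        elif char == "]":
            if not open_brackets:
                raise ValueError("unmatched ']'")
            start = open_brackets.pop()
            jumps[start], jumps[pos] = pos, start
    if open_brackets:
        raise ValueError("unmatched '['")

    tape = [0] * tape_size   # the machine's memory
    ptr = 0                  # data pointer into the tape
    pc = 0                   # position in the program text
    pending_input = iter(input_data)
    output = []

    while pc < len(program):
        char = program[pc]
        if char == ">":                      # move the data pointer right
            ptr = (ptr + 1) % tape_size
        elif char == "<":                    # move the data pointer left
            ptr = (ptr - 1) % tape_size
        elif char == "+":                    # increment the current cell
            tape[ptr] = (tape[ptr] + 1) % 256
        elif char == "-":                    # decrement the current cell
            tape[ptr] = (tape[ptr] - 1) % 256
        elif char == ".":                    # output the current cell as a character
            output.append(chr(tape[ptr]))
        elif char == ",":                    # read one character into the current cell
            tape[ptr] = ord(next(pending_input, "\0")) % 256
        elif char == "[" and tape[ptr] == 0:  # skip the loop body
            pc = jumps[pc]
        elif char == "]" and tape[ptr] != 0:  # jump back to the loop start
            pc = jumps[pc]
        # Any other character is treated as a comment and ignored.
        pc += 1

    return "".join(output)
```

As a usage example, `run_brainfuck("++++++++[>++++++++<-]>+.")` multiplies 8 by 8 in a loop, adds 1 and prints the character with code 65, the letter A. That a single letter needs two dozen instructions gives a sense of the difficulty the name alludes to.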

This unique combination of humour and ‘technomasochism’[6] is frequent in esolangs, including the early INTERCAL with its parodies of contemporary PLs and sci-fi literature. This playful aspect is intensified in the multicoding category, in which languages produce more than one meaning. In Piet, created in 2001 by David Morgan-Mar, the programs don’t involve words and look like abstract generative art, but it is »the reverse of generative art: the work is hand-made to fulfil the rules of the language.«[7] In Chef, also by Morgan-Mar, the contravention goes a bit further, escaping the digital realm: the program mimics the syntax of a cooking recipe, resulting in examples such as a ‘Hello, world’ program that could also be read as a normal chocolate cake recipe.[8]

Amidst so many options, the drive to find a universal language that could unify human communication has permeated humanity’s imaginary for many centuries. Part of this drive carries over into the topics discussed here, as Leibniz was a pioneering figure in theorizing a »kind of ‚computational imaginary‘ — reflecting on the analytical and generative possibilities of rendering the world computable«.[9] He believed that the ultimate language, or ‘characteristica universalis’, would be possible by adopting logic calculation as its basis. While his discourse is often deemed absurd in the face of the intrinsic inconsistency and ambiguity of natural languages, it serves as an epistemological provocation comparable to the absurdity of some esolangs. Machines are indifferent to the ways humans manipulate their voltage differences, so different syntaxes are purely a thematic and aesthetic decision. The act of refusing pre-existing computational rules, however, is what really pushes the inner workings of such systems to new implementations of logic, causality and forms of thinking.

An example of this shift in computational thought is the proposal of Noneleatic Languages by Evan Buswell, a work in progress since 2015 that radically questions the very basis of mainstream programming by negating conditional branch statements and variables to introduce languages that »imagine code and state together«.[10] The class of ‘fungeoids’ is another mind-bending example, involving ‘instruction pointers’ with a sense of n-dimensional direction. They were introduced in 1993 with Chris Pressey’s Befunge, a two-dimensional esolang, and the idea was recently taken to the extreme by Dimensions, which has 52 dimensions. It is true that those esolangs are never widely used, because of their lack of practical uses or extreme technical complexity – or even because they fit into the category of completely immaterial, non-experiential thought experiments.[11]
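The idea of an instruction pointer with a direction can be sketched in a few lines of Python. The interpreter below covers only a small subset of Befunge-93 (digits, `+ - *`, the arrows `> < ^ v`, the conditionals `_` and `|`, `:`, `.`, `,`, `#`, string mode and `@`). The function name `run_befunge`, the step limit and the sizing of the playfield to the program text rather than the fixed 80×25 grid are assumptions made for brevity, so it should be read as an illustration and not as a conforming implementation.

```python
def run_befunge(source: str, max_steps: int = 10_000) -> str:
    """Run a small subset of Befunge-93 and return the program's output.

    Assumptions: the playfield is sized to the source text (not 80x25),
    unsupported commands are ignored, and execution stops after max_steps.
    """
    lines = source.split("\n")
    width = max(1, max(len(line) for line in lines))
    grid = [line.ljust(width) for line in lines]
    height = len(grid)

    x, y = 0, 0        # position of the instruction pointer
    dx, dy = 1, 0      # its direction: initially moving right
    stack = []
    output = []
    string_mode = False

    def pop() -> int:
        # Popping an empty stack yields 0, as in Befunge-93.
        return stack.pop() if stack else 0

    directions = {">": (1, 0), "<": (-1, 0), "^": (0, -1), "v": (0, 1)}

    for _ in range(max_steps):
        cmd = grid[y][x]
        if string_mode:
            if cmd == '"':
                string_mode = False
            else:
                stack.append(ord(cmd))      # push characters literally
        elif cmd == '"':
            string_mode = True
        elif cmd in directions:             # change direction of travel
            dx, dy = directions[cmd]
        elif cmd in "0123456789":
            stack.append(int(cmd))
        elif cmd == "+":
            stack.append(pop() + pop())
        elif cmd == "*":
            stack.append(pop() * pop())
        elif cmd == "-":
            b, a = pop(), pop()
            stack.append(a - b)
        elif cmd == ":":                    # duplicate the top of the stack
            top = pop()
            stack.extend([top, top])
        elif cmd == "_":                    # horizontal if: right on 0, else left
            dx, dy = (1, 0) if pop() == 0 else (-1, 0)
        elif cmd == "|":                    # vertical if: down on 0, else up
            dx, dy = (0, 1) if pop() == 0 else (0, -1)
        elif cmd == ",":                    # output as character
            output.append(chr(pop()))
        elif cmd == ".":                    # output as integer plus a space
            output.append(f"{pop()} ")
        elif cmd == "#":                    # bridge: skip the next cell
            x, y = (x + dx) % width, (y + dy) % height
        elif cmd == "@":                    # end of program
            return "".join(output)
        # Advance the pointer, wrapping around the edges of the playfield.
        x, y = (x + dx) % width, (y + dy) % height

    raise RuntimeError("step limit reached without encountering '@'")
```

A two-line program shows the change of direction: in `run_befunge("v\n>52*.@")` the pointer starts at the top left, is sent down by `v`, turned right by `>`, then pushes 5 and 2, multiplies them and prints the result. Control flow here is a matter of geometry instead of line order, which is what sets fungeoids apart from conventional one-dimensional source text.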

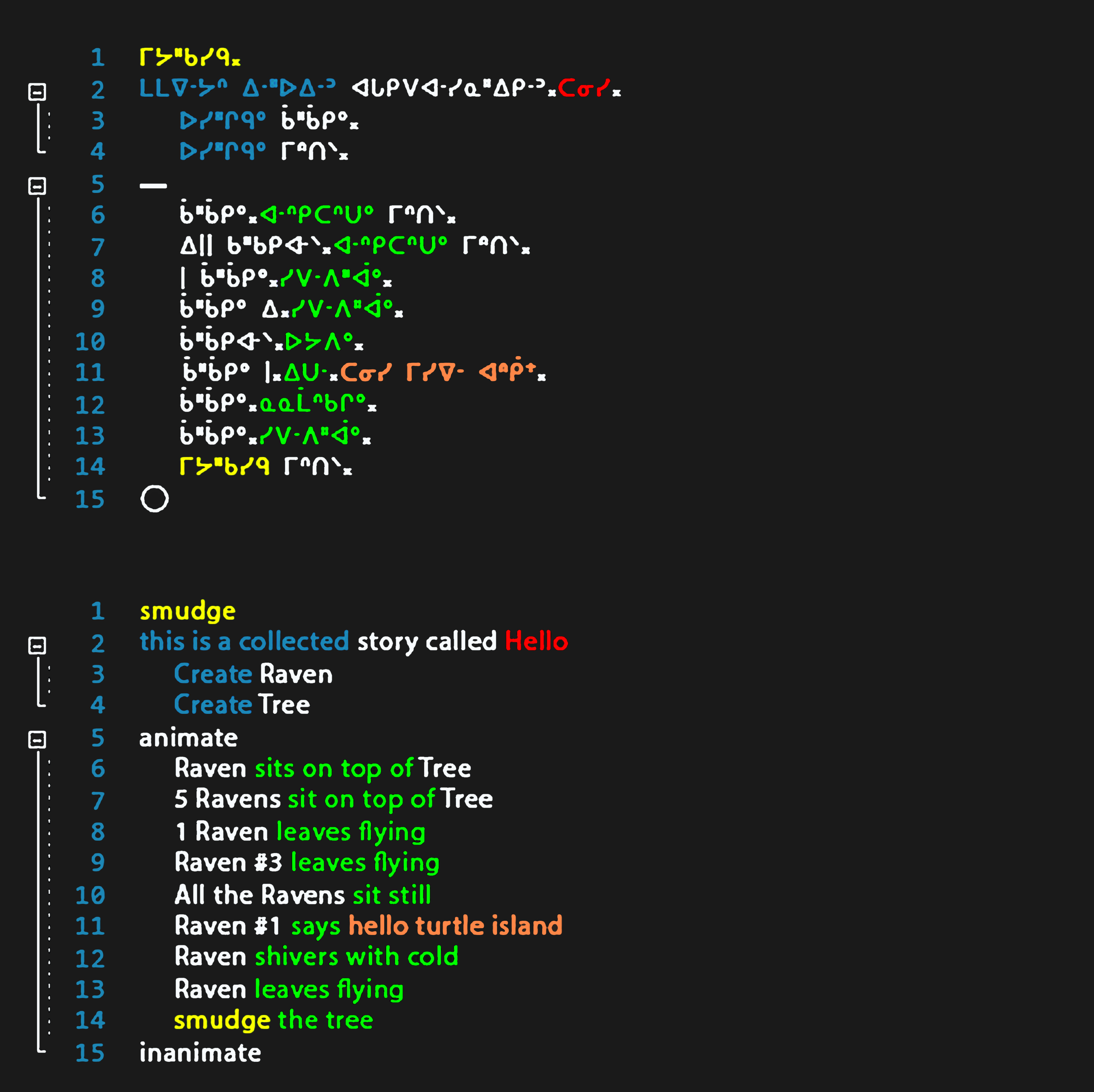

However, esolangs are not always recent inventions; some are ancient knowledge finally applied to computer science. More than performing code in a non-English language, Jon Corbett’s Cree# and Ancestral Code languages take a step further and »extend computing languages as tools for language revitalization, cultural maintenance and preservation«.[12] By embracing Indigenous culture, lexicon and unique mathematical structure, Corbett emphasizes the hubris of Western thinking in limiting thought itself, a problem Ramsey Nasser also encountered when creating قلب, written entirely in Arabic. Nasser stresses the importance of actually implementing his language in order to find untransposable limitations and bugs triggered by the inconsistent handling of non-Latin character encodings, which wouldn’t be possible in a purely speculative project.[13]

Many principles of the practice of esolangs resonate with queer code studies, as »the notion of queer code is both the subject and the process of the work, and this operates on multiple levels, ‘queering’ what would be considered to be the normative conventions of software and its use«.[14] Refusing the mainstream to propose a »multiplicity of ‘found’ ontologies«[15] is a great way to shift the Westernized, sexist and heteronormative »focus on stable machines, stable instruments and stable knowledge«,[16] along with the dualistic view that only endorses efficiency and usability in computing – if something doesn’t work as intended, it cannot be promptly streamlined into the relentless capitalist mode of production, and is therefore irrelevant.

Interestingly, the weirdness of most esolangs is what ends up preventing them from becoming just another forgotten and obsolete programming language, »because they do not attempt to be usable. Instead, they can often be appreciated like a piece of art, much like an oil painting can be admired for hundreds of years.«[17] They are the manifestation of fully investing in and exercising creativity and subversion within computation, valuing heterogeneous entities and agencies over a straightforward, emotionless journey from A to B.

---

For the 2021 project »Conversations with Computers«, servus.at hosted a series of presentations about esolangs and human-machine (or AI) interaction. The recordings from the symposium will soon be available at cwc.radical-openness.org and core.servus.at.